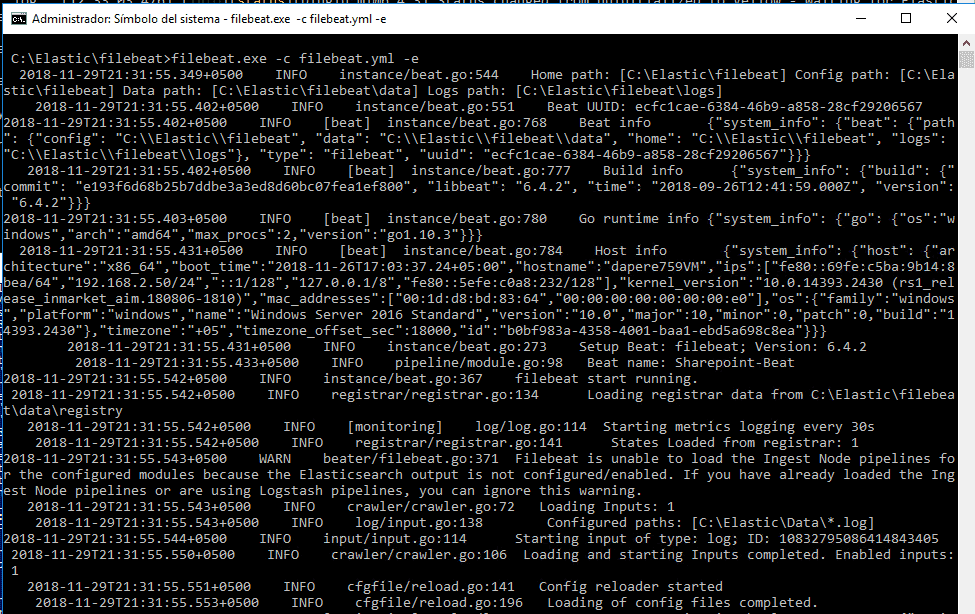

Filebeat config

Filebeat is one of the best log file shippers out there today: it's lightweight, supports SSL and TLS encryption, supports back pressure with a good built-in recovery mechanism, and is extremely reliable. It cannot, however, in most cases, turn your logs into easy-to-analyze structured log messages using filters for log enhancements. It is a relatively new component that does what Syslog-ng, Rsyslog, or other lightweight forwarders in proprietary log forwarding stacks do. It monitors files and allows you to specify different outputs such as Elasticsearch, Logstash, Redis, or a file.

My filebeat.yml configuration defined the Filebeat prospectors (the log files to watch) and a Redis output.

Elasticsearch (or any search engine) can be an operational nightmare. Your data might have to be reindexed for a whole variety of reasons. So the goal of putting Redis between your event sources and your parsing and processing is to index and parse only as fast as your nodes and database can handle, pulling from the event stream instead of having events pushed into your pipeline.

If you run "redis-cli monitor", you can see what's happening on your Redis server: Filebeat sending data and Logstash asking for it. The RPUSH commands show when an event from /app/backend/api/tomcat/logs/app.log was processed and then split into multiple JSON log entries; these were generated when I called the API. We also see Logstash requesting 125 entries from the list with the LRANGE command. Most of the time this returns an empty list.

Parsing Web Logs with the Grok Filter Plugin

Now you have a working pipeline that reads log lines from Filebeat. However, you'll notice that the format of the log messages is not ideal. You want to parse the log messages to create specific, named fields from the logs.
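Grok patterns are essentially named regular expressions, so the idea of turning a raw line into named fields can be sketched in plain Python. This is an illustration under stated assumptions, not a grok configuration: the hypothetical `parse_line` function matches an Apache common-log-format line with a regex whose named groups loosely follow the field names of grok's COMMONAPACHELOG pattern (clientip, verb, request, response, bytes, and so on). The sample line is the standard Apache documentation example, not a line from the logs in this post.

```python
import re
from typing import Dict, Optional

# Named groups play the role of grok's %{PATTERN:field} captures.
# Field names loosely follow grok's COMMONAPACHELOG pattern (assumption).
COMMON_LOG = re.compile(
    r'(?P<clientip>\S+) (?P<ident>\S+) (?P<auth>\S+) '
    r'\[(?P<timestamp>[^\]]+)\] '
    r'"(?P<verb>[A-Z]+) (?P<request>\S+) HTTP/(?P<httpversion>[\d.]+)" '
    r'(?P<response>\d{3}) (?P<bytes>\d+|-)'
)


def parse_line(line: str) -> Optional[Dict[str, str]]:
    """Return the named fields of a common-log-format line, or None if it doesn't match."""
    match = COMMON_LOG.match(line)
    return match.groupdict() if match else None


sample = '127.0.0.1 - frank [10/Oct/2000:13:55:36 -0700] "GET /apache_pb.gif HTTP/1.0" 200 2326'
fields = parse_line(sample)
print(fields["verb"], fields["request"], fields["response"])  # GET /apache_pb.gif 200
```

Lines that don't match simply return None in this sketch; in Logstash, the grok filter instead tags such events with _grokparsefailure.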

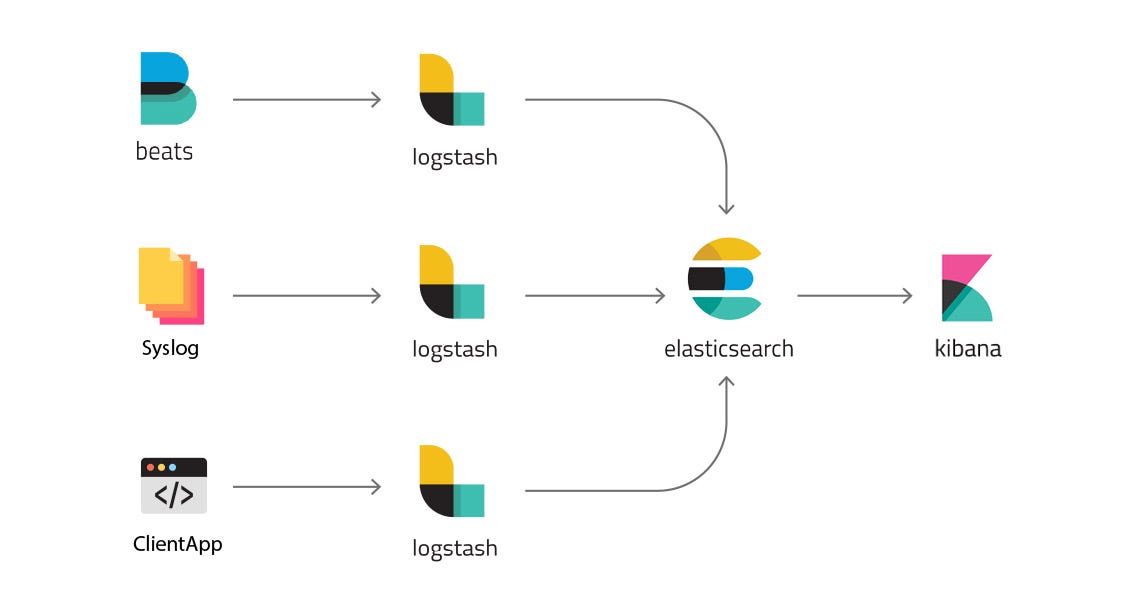

Redis, the popular open source in-memory data store, has been used as a persistent on-disk database that supports a variety of data structures such as lists, sets, sorted sets (with range queries), strings, geospatial indexes (with radius queries), bitmaps, hashes, and HyperLogLogs. Today, the in-memory store is used to solve various problems in areas such as real-time messaging, caching, and statistics calculation. In this post, we'll look at how we can use Redis as a buffer in the ELK Stack to ship, analyze, and visualize the data.

The image above describes what we're trying to achieve:

- Filebeat agents: containers running some apps to generate logs, plus Filebeat.
- Redis server: running in a container, installed from the default package repositories.
- Multiple Logstash instances that have Redis as their input and Elasticsearch as their output.

Of course, none of this was really necessary, and all of it could have been put on a single node, but I wanted to test a distributed setup.
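To make the buffering idea concrete, here is a minimal Python sketch of a Redis list used as a queue between Filebeat-style producers and Logstash-style consumers. It does not talk to a real Redis server: the hypothetical `ListBuffer` class keeps items in memory, `rpush` appends to the tail the way RPUSH does, and `pop_batch` removes up to `batch_size` items from the head, approximating the LRANGE/LTRIM pair a batching consumer issues. The batch size of 125 is an assumption chosen to match the default `batch_count` of the Logstash redis input.

```python
from collections import deque
from typing import Deque, List


class ListBuffer:
    """In-memory stand-in for a Redis list used as a queue (hypothetical).

    Producers append to the tail (like RPUSH); consumers remove up to
    batch_size items from the head, approximating LRANGE 0 n-1 + LTRIM n -1.
    """

    def __init__(self) -> None:
        self._items: Deque[str] = deque()

    def rpush(self, *values: str) -> int:
        """Append values to the tail and return the new length, as RPUSH does."""
        self._items.extend(values)
        return len(self._items)

    def pop_batch(self, batch_size: int = 125) -> List[str]:
        """Remove and return up to batch_size items from the head.

        Returns an empty list when the buffer is empty, like LRANGE on an empty key.
        """
        count = min(batch_size, len(self._items))
        return [self._items.popleft() for _ in range(count)]


def drain(buffer: ListBuffer, batch_size: int = 125) -> List[int]:
    """Pull batches until the buffer is empty; return each batch's size."""
    sizes: List[int] = []
    while True:
        batch = buffer.pop_batch(batch_size)
        sizes.append(len(batch))
        if not batch:
            # Nothing left: a real consumer would wait and poll again.
            return sizes


buf = ListBuffer()
# A burst of 300 JSON events arrives faster than the consumer reads them.
buf.rpush(*(f'{{"message": "event {i}"}}' for i in range(300)))
print(drain(buf))  # [125, 125, 50, 0]
```

The burst is absorbed by the list and drained in batches at whatever pace the consumer runs; the final empty batch corresponds to a consumer polling an empty list. Because consumers pull rather than receive pushes, adding more Logstash instances just means more readers taking batches from the same list.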
